
Several computational aspects affecting efficiency were addressed:

- Modified routine netcdf_create to set the NOFILL mode, improving performance in serial I/O applications that involve a large number of CPUs:

```fortran
!
!  Set NOFILL mode to enhance performance.
!
      IF (exit_flag.eq.NoError) THEN
        status=nf90_set_fill(ncid, nf90_nofill, OldFillMode)
        IF (FoundError(status, nf90_noerr, __LINE__,                    &
     &                 __FILE__ //                                      &
     &                 ", netcdf_create")) THEN
          IF (Master) WRITE (stdout,10) TRIM(ncname), TRIM(SourceFile)
          exit_flag=3
          ioerror=status
        END IF
      END IF
```

This changes the default behavior, in which variables are prefilled with fill values that are later overwritten when the NetCDF library writes data into the file.

Notice that this feature may not be available (or even needed) in future releases of the NetCDF library.

Many thanks to Jiangtao Xu at NOAA for bringing this to my attention.
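The toy model below illustrates why prefilling costs I/O. It is a hypothetical, pure-Python simplification that counts element writes and does not call the netCDF library. In fill mode, every element is first written with the fill value (here the netCDF default for doubles, NC_FILL_DOUBLE) and then overwritten with data, so a variable written once in full is written twice. In NOFILL mode it is written once, but any element that is never written holds undefined values.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# netCDF default fill value for 8-byte reals (NC_FILL_DOUBLE)
NC_FILL_DOUBLE = 9.9692099683868690e36


@dataclass
class ToyVariable:
    """Hypothetical stand-in for a fixed-size netCDF variable.

    It only counts element writes; it is not the netCDF API.
    """
    size: int
    prefill: bool
    writes: int = 0
    data: List[Optional[float]] = field(default_factory=list)

    def __post_init__(self) -> None:
        # NOFILL: nothing is written up front, so unwritten elements
        # stay undefined (None in this toy model).
        self.data = [None] * self.size
        if self.prefill:
            # Fill mode: every element is first written with the fill value.
            self.data = [NC_FILL_DOUBLE] * self.size
            self.writes += self.size

    def put(self, start: int, values: List[float]) -> None:
        """Write a contiguous slab, overwriting whatever was there."""
        self.data[start:start + len(values)] = values
        self.writes += len(values)


def element_writes(size: int, prefill: bool) -> int:
    """Element writes needed to write a whole variable once."""
    var = ToyVariable(size, prefill)
    var.put(0, [1.0] * size)
    return var.writes
```

For example, `element_writes(1000, True)` returns 2000, while `element_writes(1000, False)` returns 1000.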

The array TLmodVal_S is used by the 4D-Var minimization solver (congrad.F or rpcg_lanczos.F), which runs only on the master processor. If the number of observations is large, this array becomes a memory hog and a bottleneck for fine-resolution grids running in multi-node MPI applications.

Many thanks to Brian Powell for reporting this problem.
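A back-of-the-envelope estimate shows why keeping a single copy on the master matters. The sketch makes two hypothetical assumptions: a TLmodVal_S-like array of Mobs × Ninner × Nouter 8-byte reals, and 32 MPI ranks on the master's node. The actual array shape in ROMS may differ.

```python
def array_bytes(mobs: int, ninner: int, nouter: int, bytes_per_value: int = 8) -> int:
    """Bytes in one copy of a hypothetical Mobs x Ninner x Nouter real(r8) array."""
    return mobs * ninner * nouter * bytes_per_value


def node_bytes(per_copy: int, ranks_on_node: int, master_only: bool) -> int:
    """Bytes used on the node hosting the master rank."""
    copies = 1 if master_only else ranks_on_node
    return per_copy * copies


if __name__ == "__main__":
    per_copy = array_bytes(mobs=2_000_000, ninner=25, nouter=2)
    every_rank = node_bytes(per_copy, ranks_on_node=32, master_only=False)
    master = node_bytes(per_copy, ranks_on_node=32, master_only=True)
    print(f"every rank: {every_rank / 1e9:.1f} GB, master only: {master / 1e9:.1f} GB")
```

With these made-up sizes it prints `every rank: 25.6 GB, master only: 0.8 GB`.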

- Removed the MPI broadcasting of the TLmodVal_S array in congrad.F and rpcg_lanczos.F, since the array is unallocated on the other MPI nodes.

- The reading of array TLmodVal_S in the observation impacts and observation sensitivities (obs_sen_w4dpsas.h, obs_sen_w4dvar.h) and array modes (array_modes_w4dvar.h) drivers was modified to avoid broadcasting its values to the other nodes in the communicator group, since the variable is unallocated there:

```fortran
      CALL netcdf_get_fvar (ng, iTLM, LCZ(ng)%name, 'TLmodVal_S',       &
     &                      TLmodVal_S,                                 &
     &                      broadcast = .FALSE.)     ! Master use only
      IF (FoundError(exit_flag, NoError, __LINE__,                      &
     &               __FILE__)) RETURN
```

- Modified module procedure netcdf_get_fvar in mod_netcdf.F to include the optional broadcast argument, which controls whether the read values are broadcast to all members of the communicator in distributed-memory applications. For example, we now have:

```fortran
      SUBROUTINE netcdf_get_fvar_1d (ng, model, ncname, myVarName, A,   &
     &                               ncid, start, total, broadcast,     &
     &                               min_val, max_val)
!
!=======================================================================
!                                                                      !
!  This routine reads requested floating-point 1D-array variable from  !
!  specified NetCDF file.                                              !
!                                                                      !
!  On Input:                                                           !
!                                                                      !
!     ng           Nested grid number (integer)                        !
!     model        Calling model identifier (integer)                  !
!     ncname       NetCDF file name (string)                           !
!     myVarName    Variable name (string)                              !
!     ncid         NetCDF file ID (integer, OPTIONAL)                  !
!     start        Starting index where the first of the data values   !
!                    will be read along each dimension (integer,       !
!                    OPTIONAL)                                         !
!     total        Number of data values to be read along each         !
!                    dimension (integer, OPTIONAL)                     !
!     broadcast    Switch to broadcast read values from root to all    !
!                    members of the communicator in distributed-       !
!                    memory applications (logical, OPTIONAL,           !
!                    default=TRUE)                                     !
!                                                                      !
!  On Output:                                                          !
!                                                                      !
!     A            Read 1D-array variable (real)                       !
!     min_val      Read data minimum value (real, OPTIONAL)            !
!     max_val      Read data maximum value (real, OPTIONAL)            !
!                                                                      !
!  Examples:                                                           !
!                                                                      !
!    CALL netcdf_get_fvar (ng, iNLM, 'file.nc', 'VarName', fvar)       !
!    CALL netcdf_get_fvar (ng, iNLM, 'file.nc', 'VarName', fvar(0:))   !
!    CALL netcdf_get_fvar (ng, iNLM, 'file.nc', 'VarName', fvar(:,1))  !
!                                                                      !
!=======================================================================
!
```
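The documented semantics of the optional broadcast argument can be mimicked in a serial toy model: the root reads, then the values are broadcast unless told otherwise, with a default of TRUE. Here `get_fvar_all_ranks` is a hypothetical helper, not the ROMS API. `broadcast=None` plays the role of an absent Fortran OPTIONAL argument, which Fortran code would typically resolve with `PRESENT(broadcast)`. A `None` buffer stands for a rank that never allocated the array, as with TLmodVal_S on non-master nodes.

```python
from typing import Callable, List, Optional

Buffer = Optional[List[float]]  # None: this rank never allocated the array


def get_fvar_all_ranks(buffers: List[Buffer],
                       read: Callable[[], List[float]],
                       broadcast: Optional[bool] = None) -> None:
    """Toy, serial model of a root-reads / optionally-broadcasts read.

    buffers[r] is rank r's copy of the array; rank 0 plays the master.
    """
    # Absent optional argument -> default TRUE.
    bcast = True if broadcast is None else broadcast

    root = buffers[0]
    assert root is not None, "the root must allocate the array"
    values = read()                 # only the root touches the file
    root[:] = values
    if not bcast:
        return                      # e.g. TLmodVal_S: master use only
    for rank in range(1, len(buffers)):
        target = buffers[rank]
        if target is None:
            raise RuntimeError(f"rank {rank}: broadcast into unallocated array")
        target[:] = values


if __name__ == "__main__":
    tl = [[0.0] * 3, None, None, None]      # allocated on the master only
    get_fvar_all_ranks(tl, lambda: [1.0, 2.0, 3.0], broadcast=False)
    print(tl)
```

Calling it on the same buffers with the default `broadcast` would raise. This is the situation the `broadcast = .FALSE.` calls avoid.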

- Modified the fine-tuning of input parameter nRST in read_phypar.F to allow the writing of various initialization records in lagged data assimilation windows:

```fortran
#if defined IS4DVAR || defined W4DPSAS || defined W4DVAR
!
!  Ensure that the restart file is written at least at the end. In
!  sequential data assimilation the restart file can be used as the
!  first guess for the next assimilation cycle. Notice that we can
!  also use the DAINAME file for such purpose. However, in lagged
!  data assimilation windows, "nRST" can be set to a value less than
!  "ntimes" (say, daily) and "LcycleRST" is set to false. So, there
!  are several initialization record possibilities for the next
!  assimilation cycle.
!
      IF (nRST(ng).gt.ntimes(ng)) THEN
        nRST(ng)=ntimes(ng)
      END IF
#endif
```

Many thanks to Jiangtao Xu at NOAA for bringing this to my attention.
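The sketch below shows the clamping rule and its effect on the available initialization records. It rests on two hypothetical assumptions: a restart record is written every nRST steps, and LcycleRST=.TRUE. keeps only the two most recent records. Check read_phypar.F and the ROMS output routines for the exact behavior.

```python
from typing import List


def clamp_nrst(nrst: int, ntimes: int) -> int:
    """Mirror of: IF (nRST(ng).gt.ntimes(ng)) nRST(ng)=ntimes(ng)."""
    return ntimes if nrst > ntimes else nrst


def restart_steps(nrst: int, ntimes: int) -> List[int]:
    """Steps with a restart record (assumed: every nRST steps)."""
    n = clamp_nrst(nrst, ntimes)
    return [step for step in range(1, ntimes + 1) if step % n == 0]


def kept_records(steps: List[int], cycle: bool) -> List[int]:
    """Records left in the file (assumed: recycling keeps the last two)."""
    return steps[-2:] if cycle else steps
```

For a hypothetical 3-day window of 72 hourly steps, `restart_steps(24, 72)` gives `[24, 48, 72]`. With `cycle=False` all three remain as candidate initial conditions for the next cycle. An oversized `nRST=1000` is clamped, so `restart_steps(1000, 72)` still yields `[72]`.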

- If ROMS_STDOUT is activated, make sure the standard output file is only opened by the master processor in inp_par.F:

```fortran
#ifdef ROMS_STDOUT
!
!  Change default Fortran standard out unit, so ROMS run information is
!  directed to a file. This is advantageous in coupling applications to
!  keep ROMS information separated from other models.
!
      stdout=20                      ! overwrite Fortran default unit 6
!
      IF (Master) THEN
        OPEN (stdout, FILE='log.roms', FORM='formatted',                &
     &        STATUS='replace')
      END IF
#endif
```

It also requires modifying the clock_on and clock_off critical regions in timers.F:

```fortran
# ifdef ROMS_STDOUT
          IF (.not.allocated(Pids)) THEN
            allocate ( Pids(numthreads) )
            Pids=0
          END IF
          Pids(MyRank+1)=proc(0,MyModel,ng)
          CALL mp_collect (ng, model, numthreads, Pspv, Pids)
          IF (Master) THEN
            DO node=1,numthreads
              WRITE (stdout,10) ' Node #', node-1,                      &
     &                          ' (pid=',Pids(node),') is active.'
            END DO
          END IF
          IF (allocated(Pids)) deallocate (Pids)
# else
          WRITE (stdout,10) ' Node #', MyRank,                          &
     &                      ' (pid=',proc(0,MyModel,ng),') is active.'
# endif
```

and

```fortran
# ifdef ROMS_STDOUT
          IF (.not.allocated(Tend)) THEN
            allocate ( Tend(numthreads) )
            Tend=0.0_r8
          END IF
          Tend(MyRank+1)=Cend(region,MyModel,ng)
          CALL mp_collect (ng, model, numthreads, Tspv, Tend)
          IF (Master) THEN
            DO node=1,numthreads
              WRITE (stdout,10) ' Node #', node-1,                      &
     &                          ' CPU:', Tend(node)
            END DO
          END IF
          IF (allocated(Tend)) deallocate (Tend)
# else
          WRITE (stdout,10) ' Node #', MyRank, ' CPU:',                 &
     &                      Cend(region,MyModel,ng)
# endif
```
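Why gather first? With ROMS_STDOUT, only the master opens unit 20. A WRITE to that unit from any other rank would hit an unconnected unit; with gfortran, for instance, this silently creates a file named fort.20. The sketch models the pattern in the timers.F excerpts: each rank fills only its own slot of a zero-initialized array, the arrays are combined, and only the master writes the lines. Treating mp_collect as an element-wise sum is an assumption made for this sketch. The real routine takes a special-value argument (Pspv, Tspv) and may combine values differently. The output format is also illustrative, not format label 10.

```python
from typing import List, Sequence


def collect(per_rank: Sequence[Sequence[int]]) -> List[int]:
    """Stand-in for mp_collect: element-wise sum over ranks (assumption)."""
    return [sum(column) for column in zip(*per_rank)]


def active_node_report(pids: Sequence[int]) -> List[str]:
    """Lines the master would write after the collect."""
    numthreads = len(pids)
    per_rank = []
    for my_rank, pid in enumerate(pids):
        local = [0] * numthreads        # Pids=0 on every rank
        local[my_rank] = pid            # Pids(MyRank+1)=proc(0,MyModel,ng)
        per_rank.append(local)
    gathered = collect(per_rank)        # every slot is now filled
    # Only the master formats these lines and writes them to the log unit.
    return [f" Node #{node:4d} (pid={gathered[node]:8d}) is active."
            for node in range(numthreads)]


if __name__ == "__main__":
    for line in active_node_report([4101, 4102, 4103, 4104]):
        print(line)
```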


The Matlab script used to compute nested grid connectivity (grid_connections.m) was updated to make it more robust in telescoping nesting applications.

Currently, a telescoping grid in ROMS is a refined grid (refine_factor > 0) that contains a finer grid inside. Notice that under this definition the coarsest grid (ng=1, refine_factor=0) is not considered a telescoping grid. The last cascading grid is not considered a telescoping grid either, since it does not contain a finer grid inside. The telescoping definition is a technical one, and it facilitates the algorithm design.
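The telescoping definition can be written as a small predicate. The sketch assumes, hypothetically, that each grid records its refine_factor and the number of the grid it is refined from (`parent`). It illustrates the definition only and is not how grid_connections.m is written.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set


@dataclass
class Grid:
    refine_factor: int          # 0 for the coarsest (ng=1) grid
    parent: Optional[int]       # grid it is refined from (hypothetical field)


def telescoping_grids(grids: Dict[int, Grid]) -> Set[int]:
    """Refined grids (refine_factor > 0) that contain a finer refined grid."""
    contains_finer = {g.parent for g in grids.values()
                      if g.refine_factor > 0 and g.parent is not None}
    return {ng for ng, g in grids.items()
            if g.refine_factor > 0 and ng in contains_finer}
```

For three cascading grids, `{1: Grid(0, None), 2: Grid(3, 1), 3: Grid(3, 2)}`, it returns `{2}`. Grid 1 is excluded because it is not refined, and grid 3 because nothing is nested inside it.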

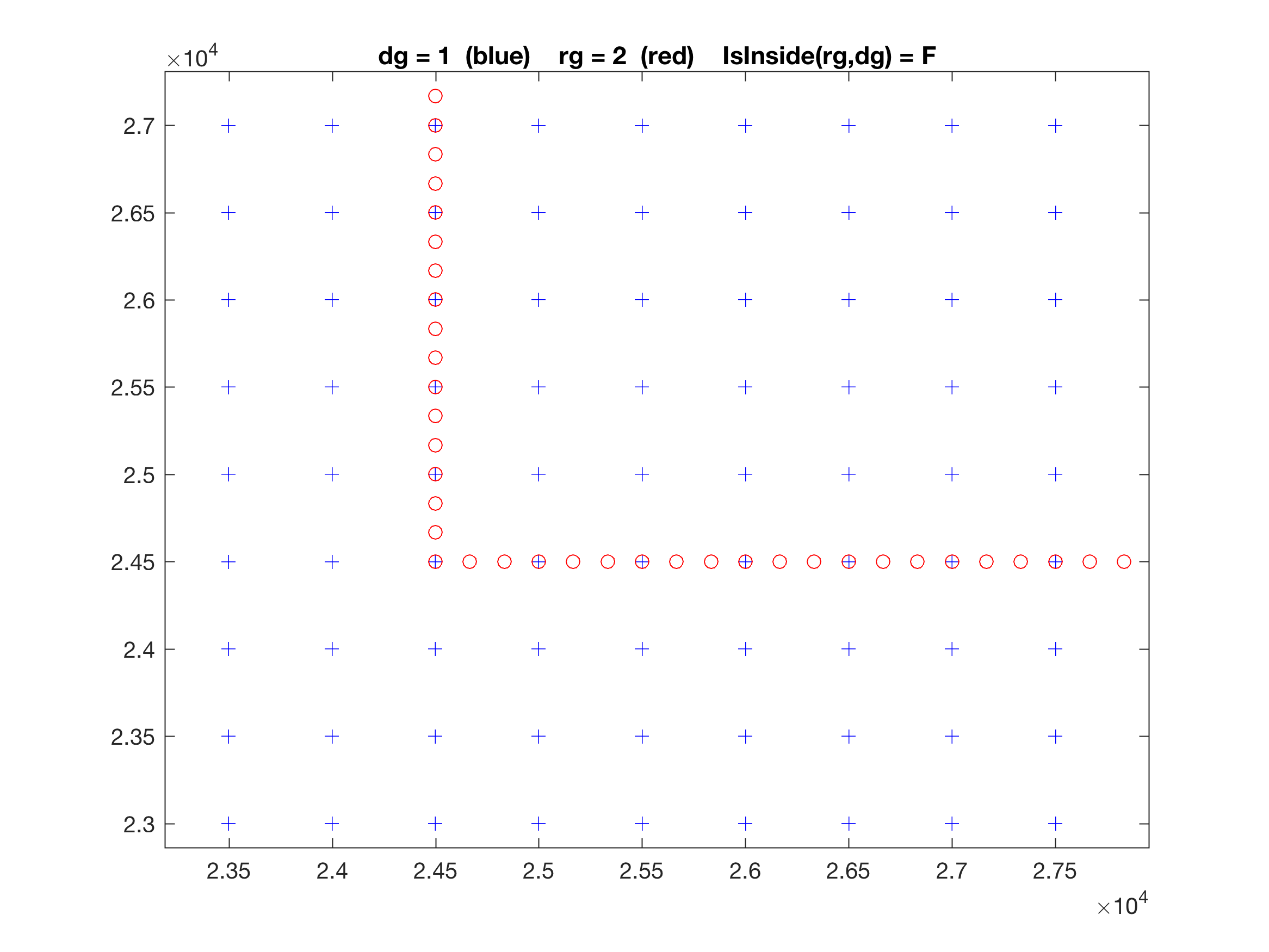

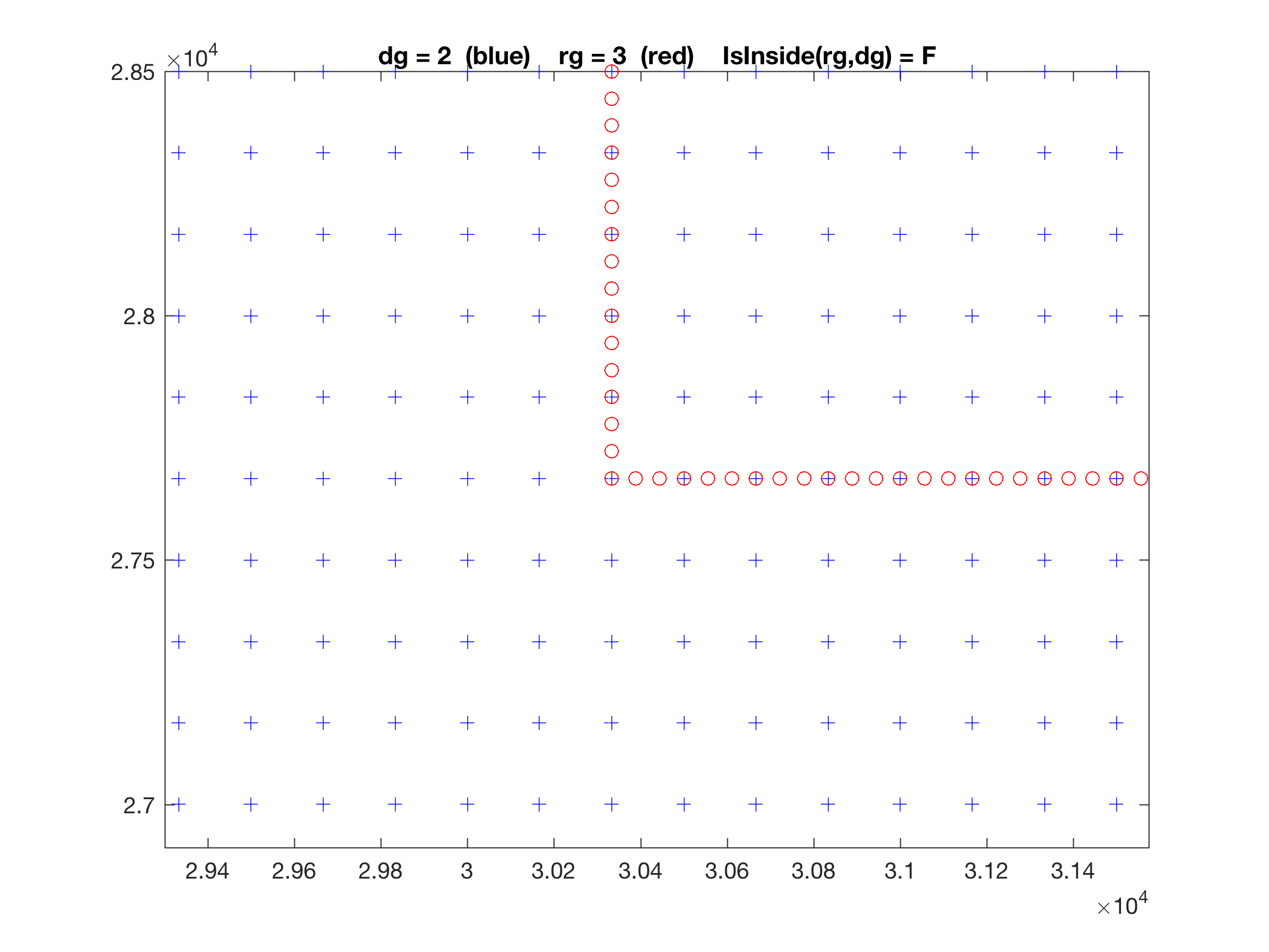

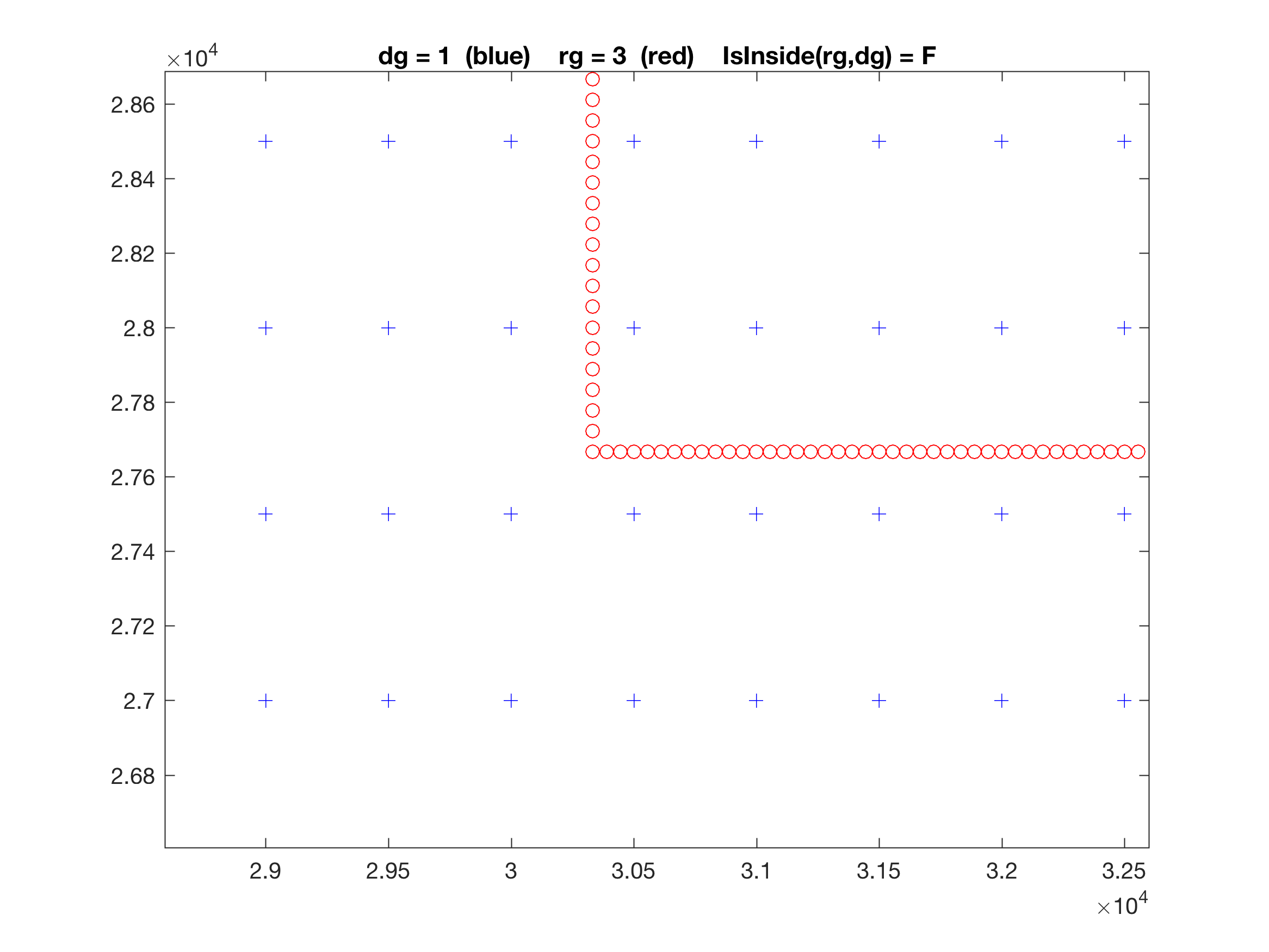

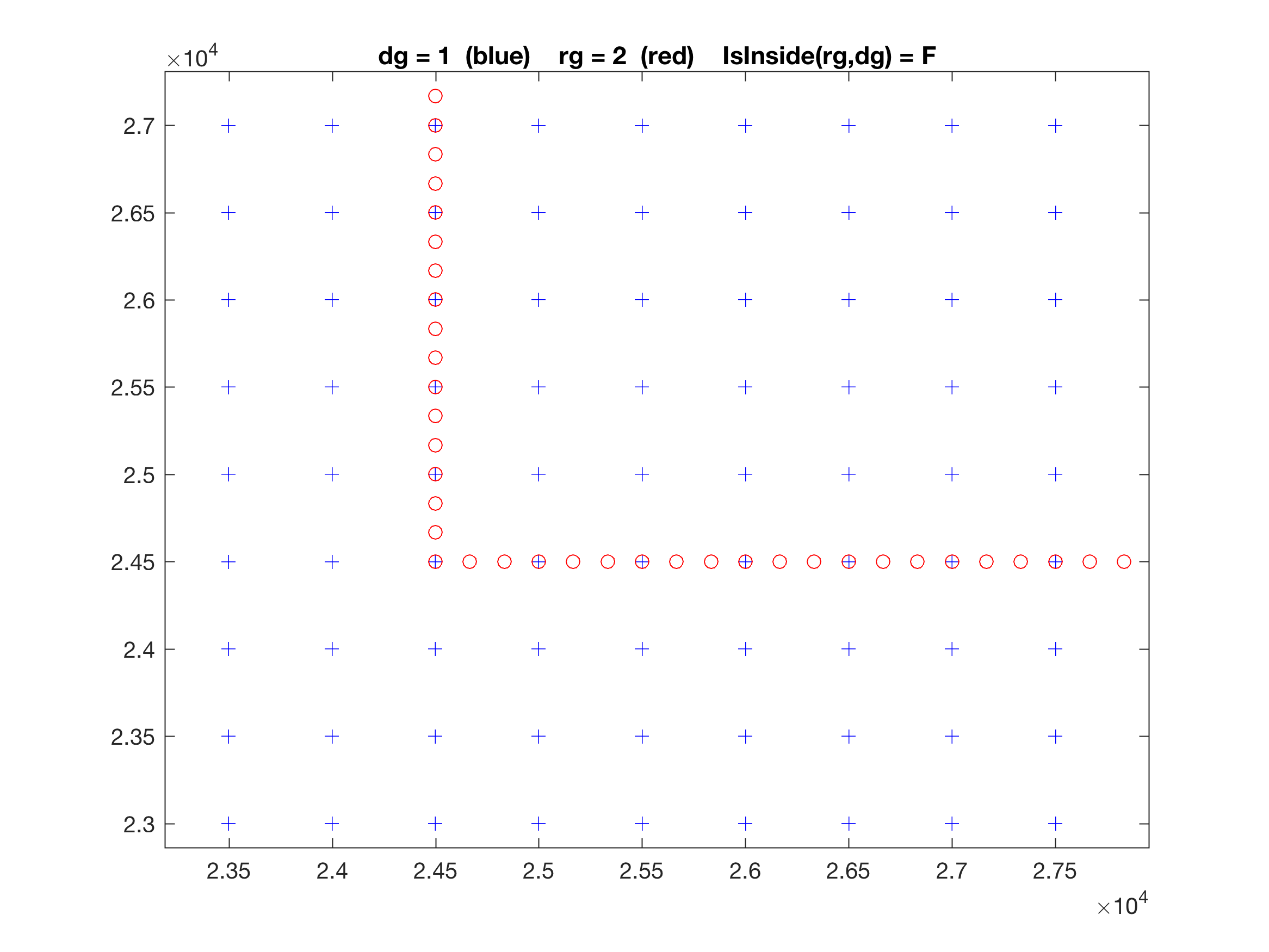

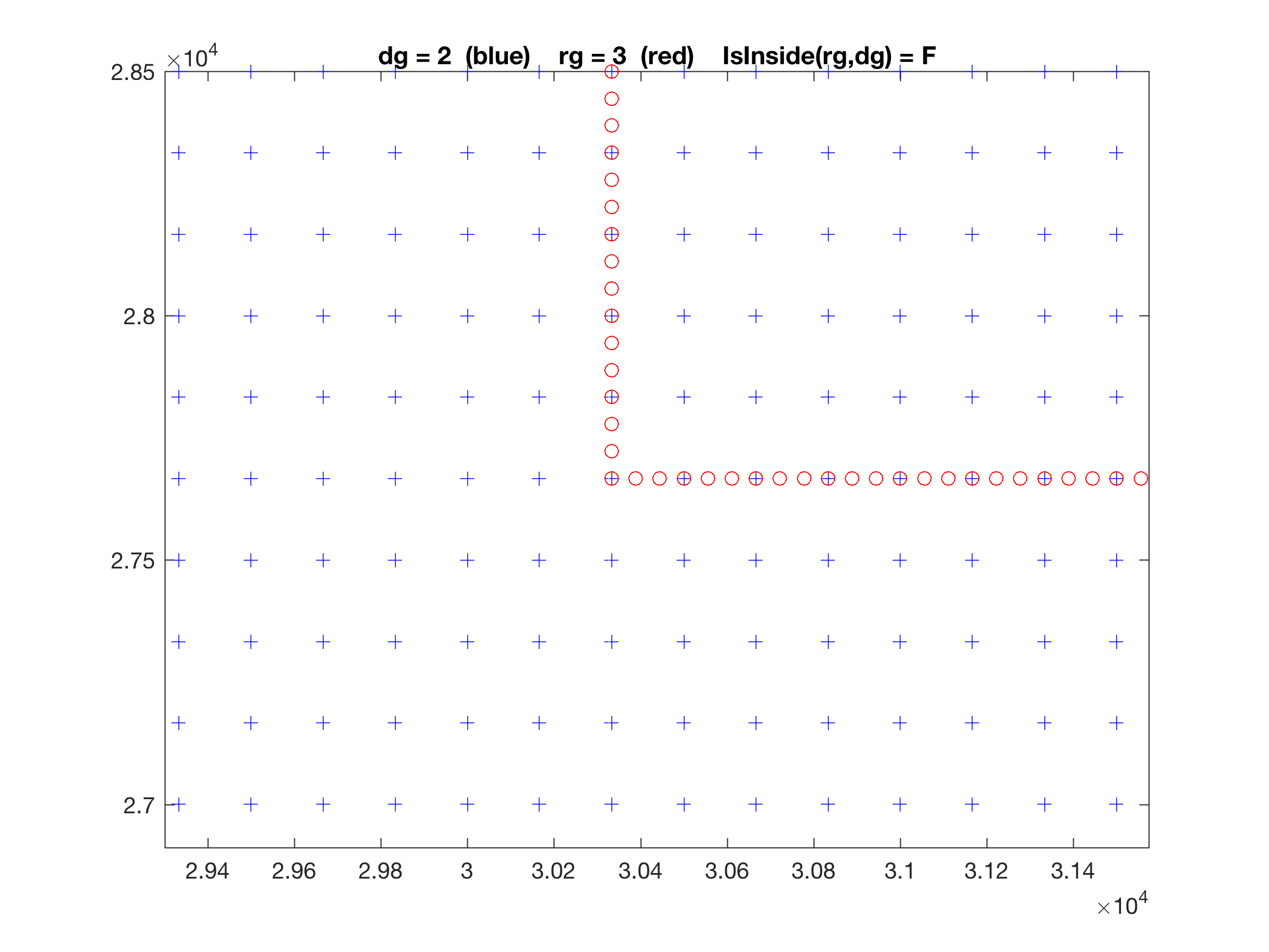

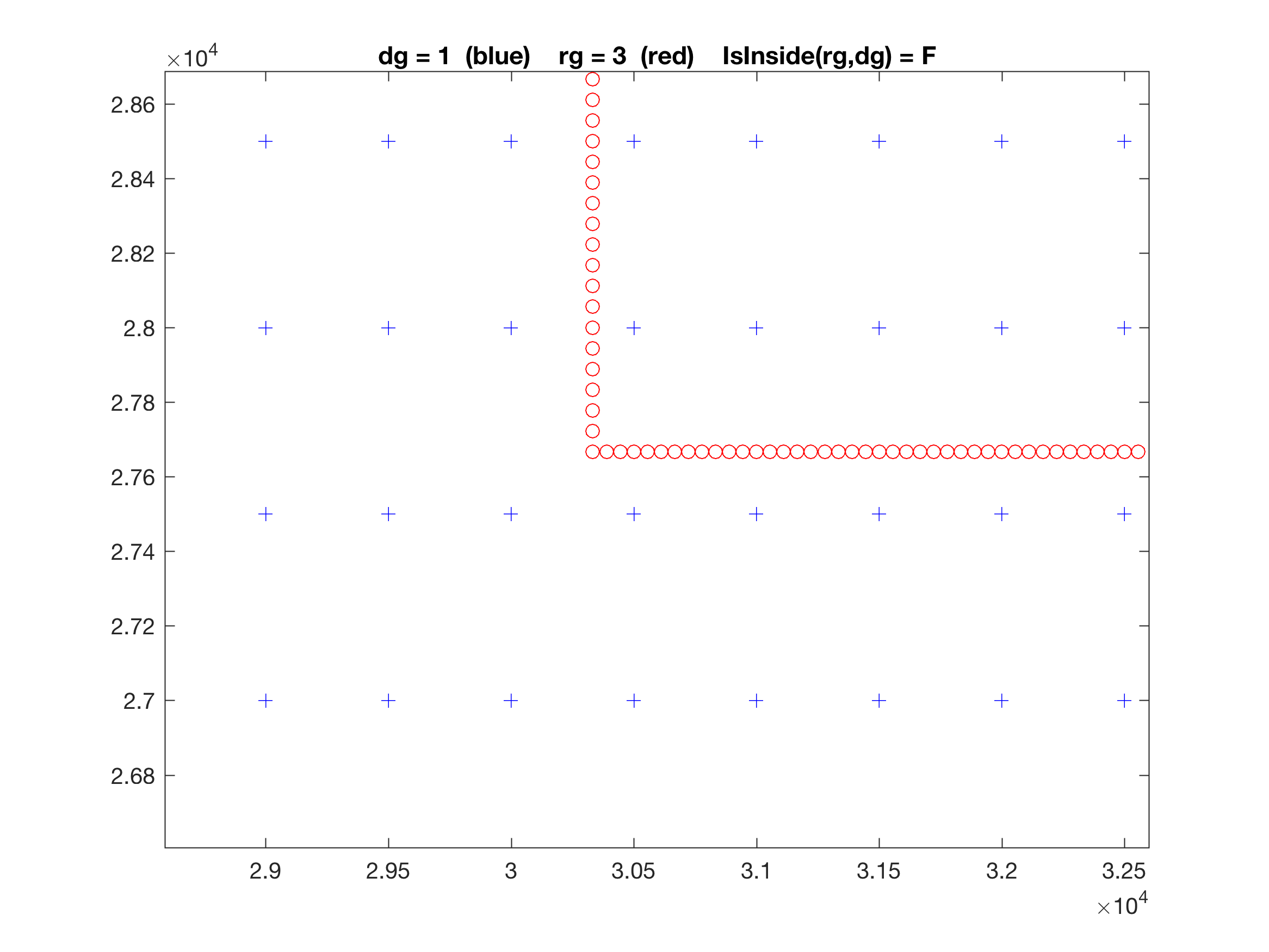

A tricky algorithm was added at the top of this function to reject indirect connectivity in telescoping refinement. Previously, only one coincident connection between grids was allowed, as shown in the figures:

Here, we have a three-grid nested application. Grid-2 is a 1:3 refinement of Grid-1, and Grid-3 is a 1:3 refinement of Grid-2. Grid-2 is the only telescoping grid in this application according to the above definition. Notice that Grid-3 is inside both Grid-2 (directly) and Grid-1 (indirectly).

The panels show the locations of the donor grid (blue plus) and receiver grid (red circle) PSI-points. Notice that in the top panel the Grid-2 perimeter lies along the same grid lines as donor Grid-1, and some of the points are coincident, as they should be. The same occurs in the middle panel between Grid-2 and Grid-3.
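The coincident points can be reproduced with exact rational arithmetic. Positions are expressed in Grid-1 PSI-index units. The corner offsets (Grid-2 starting at Grid-1 index 10, Grid-3 starting at Grid-2 index 4) are hypothetical values chosen for illustration. The indirect Grid-1/Grid-3 pair has an effective 1:9 ratio and, depending on the offset, can also have coincident points.

```python
from fractions import Fraction
from typing import List


def receiver_positions(corner: Fraction, ratio: int, npoints: int) -> List[Fraction]:
    """Receiver PSI points along one edge, in Grid-1 index units."""
    return [corner + Fraction(k, ratio) for k in range(npoints)]


def coincident_indices(positions: List[Fraction]) -> List[int]:
    """Receiver points landing exactly on a Grid-1 PSI point (integer index)."""
    return [k for k, x in enumerate(positions) if x.denominator == 1]


# Hypothetical layout: the Grid-2 edge starts at Grid-1 index 10 (ratio 3);
# the Grid-3 edge starts at Grid-2 index 4, i.e. Grid-1 index 10 + 4/3,
# with an effective ratio of 3*3 = 9 relative to Grid-1.
grid2 = receiver_positions(Fraction(10), 3, 7)
grid3 = receiver_positions(Fraction(10) + Fraction(4, 3), 9, 19)
```

Here `coincident_indices(grid2)` gives `[0, 3, 6]` and `coincident_indices(grid3)` gives `[6, 15]`. The indirect pair shares points too, so coincidence alone cannot tell a direct connection from an indirect one.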

However, we previously did not allow coincident grid points in the indirect connection between Grid-1 and Grid-3. We were using that property to reject indirect connections between nested grids.

I was aware of this restriction, but I forgot to go back and code a robust logic in grid_connections.m to allow coincident grid points in indirect connections for telescoping applications. This update removes the restriction.

I know this is a complicated subject to discuss in words; it needs to be shown geometrically.

Many thanks to Marc Mestres and John Wilkin for bringing this problem to my attention.
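Rejecting indirect connectivity can also be expressed through the nesting hierarchy instead of geometry. A receiver grid is connected only to the grid it is directly refined from, and every other containing grid is an indirect connection. This is a hypothetical sketch that assumes the parent of each grid is known. It is not the algorithm in grid_connections.m.

```python
from typing import Dict, List, Optional, Tuple

Pair = Tuple[int, int]  # (donor grid, receiver grid)


def containing_grids(ng: int, parent: Dict[int, Optional[int]]) -> List[int]:
    """Grids containing ng, from its direct parent outward."""
    chain: List[int] = []
    p = parent[ng]
    while p is not None:
        chain.append(p)
        p = parent[p]
    return chain


def split_connections(parent: Dict[int, Optional[int]]) -> Tuple[List[Pair], List[Pair]]:
    """Keep direct donor->receiver pairs; reject indirect (telescoped) ones."""
    accepted: List[Pair] = []
    rejected: List[Pair] = []
    for ng in sorted(parent):
        chain = containing_grids(ng, parent)
        if chain:
            accepted.append((chain[0], ng))                      # direct
            rejected.extend((outer, ng) for outer in chain[1:])  # indirect
    return accepted, rejected
```

For the three-grid example, `split_connections({1: None, 2: 1, 3: 2})` returns `([(1, 2), (2, 3)], [(1, 3)])`. The Grid-1/Grid-3 pair is rejected whether or not its points coincide.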
